Introduction

We'll start with an overview of how machine learning models work and how they are used. This may feel basic if you've done statistical modelling or machine learning before. Don't worry; we will progress to building powerful models soon.

Let's go through the following scenario:

Your cousin has made millions of dollars speculating on real estate. He's offered to become business partners with you because of your interest in data science. He'll supply the money, and you'll supply models that predict how much various houses are worth. You ask your cousin how he's predicted real estate values in the past, and he says it is just intuition. But more questioning reveals that he's identified price patterns from houses he has seen in the past, and he uses those patterns to make predictions for new houses he is considering.

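Machine learning works the same way: a model identifies patterns in data it has seen in the past and uses those patterns to make predictions for new data.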

Therefore, almost any task that can be completed with a data-defined pattern or set of rules can be automated with machine learning. This allows companies to transform processes that were previously only possible for humans to perform—think responding to customer service calls, bookkeeping, and reviewing resumes.

Techniques in machine learning:

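- Supervised learning trains a model on labeled examples, that is, inputs paired with the known correct output. The labels can come from data you have collected or from the output of a previous ML deployment. Supervised learning is exciting because it resembles the way humans learn from worked examples.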

- Unsupervised machine learning helps you find previously unknown patterns in data. In unsupervised learning, the algorithm tries to learn some inherent structure of the data from unlabeled examples only. Two common unsupervised learning tasks are clustering and dimensionality reduction.
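
To make the difference concrete, here is a minimal sketch in plain Python. Everything in it is an assumption made for illustration: the house areas are made up, the helper names `predict_nearest` and `split_into_two_groups` are hypothetical, and the techniques (nearest neighbour for the supervised case, splitting at the widest gap for the unsupervised case) are just about the simplest ones that show the idea.

```python
# Supervised: every example comes with a label (the "right answer").
labeled_houses = [
    (50, "small"),    # (area in square metres, label)
    (60, "small"),
    (200, "large"),
    (220, "large"),
]

def predict_nearest(area, examples):
    """Return the label of the training example whose area is closest."""
    nearest_area, nearest_label = min(examples, key=lambda ex: abs(ex[0] - area))
    return nearest_label

# Unsupervised: the same kind of data, but with no labels at all.
unlabeled_areas = [50, 60, 200, 220]

def split_into_two_groups(values):
    """Split the sorted values at the widest gap, a very simple form of clustering."""
    ordered = sorted(values)
    gaps = [ordered[i + 1] - ordered[i] for i in range(len(ordered) - 1)]
    cut = gaps.index(max(gaps)) + 1
    return ordered[:cut], ordered[cut:]

print(predict_nearest(70, labeled_houses))
print(split_into_two_groups(unlabeled_areas))
```

In the supervised half the answer is one of the labels we supplied. In the unsupervised half the code only reports that there seem to be two groups; naming them is left to us.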

3 Steps for ML models to work:

Step 1: Begin with existing data

Machine learning requires existing data: not the data our application will use when we run it, but data to learn from during development. You need a lot of real data, and in general the more the better. The more examples you provide, the better the computer should be able to learn, because it sees more of the different patterns and how the output depends on them.

So just collect every scrap of data you have and dump it and voila! Right?

Wrong. In order to train the computer to understand what we want and what we don’t want, you need to prepare, clean and label your data. Get rid of garbage entries, records with missing information, and anything that’s ambiguous or confusing. Filter your dataset down to only the information you’re interested in right now. Without high-quality data, machine learning does not work well. So take your time and pay attention to detail.
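
Here is a rough sketch of what that cleaning step can look like in plain Python. The rows, the column names and the rule "area and price must be positive" are all made up for this example; real projects have their own rules and usually lean on a data library.

```python
raw_rows = [
    {"area": 120, "bedrooms": 3, "price": 250000, "agent_notes": "sunny"},
    {"area": None, "bedrooms": 2, "price": 180000, "agent_notes": ""},   # missing area
    {"area": 95, "bedrooms": 2, "price": -1, "agent_notes": "typo?"},    # garbage price
    {"area": 150, "bedrooms": 4, "price": 320000, "agent_notes": "garden"},
]

# Only the information we are interested in right now.
WANTED_COLUMNS = ("area", "bedrooms", "price")

def clean(rows):
    """Keep only the wanted columns and drop rows with missing or implausible values."""
    cleaned = []
    for row in rows:
        record = {name: row.get(name) for name in WANTED_COLUMNS}
        if any(value is None for value in record.values()):
            continue  # a missing piece of information
        if record["area"] <= 0 or record["price"] <= 0:
            continue  # a garbage entry
        cleaned.append(record)
    return cleaned

clean_rows = clean(raw_rows)
print(len(raw_rows), "rows in,", len(clean_rows), "rows out")
```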

It's similar to what you're experiencing right now. As you go through this blog you are trying to learn, but if I went crazy and filled it with trash and irrelevant information, would it be a good learning experience for you?

It won't!

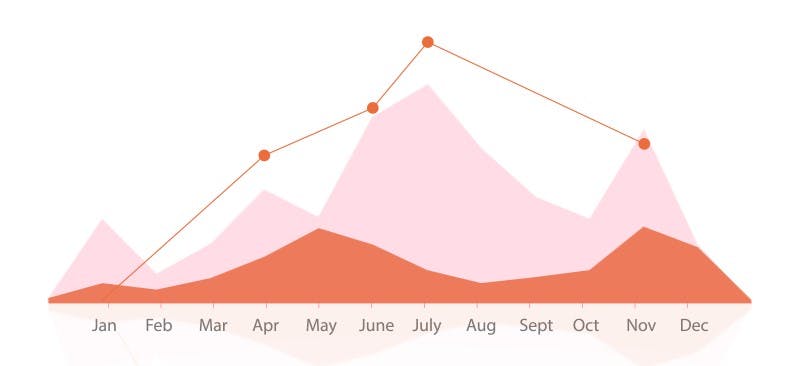

Step 2: Analyze data to identify patterns

Unlike conventional software development, where humans are responsible for interpreting large data sets, machine learning has you apply an algorithm to the data. But don’t think you’re off the hook. Choosing the right algorithm, applying it, configuring it and testing it is where the human element comes back in.

There are several platforms and algorithms to choose from, both commercial and open source. Explore solutions from Microsoft, Google, Amazon or IBM, or open-source frameworks like TensorFlow, Torch and Caffe.

They each have their own strengths and downsides, and each will interpret the same dataset in a different way.

- Some are faster to train.

- Some are more configurable.

- Some allow for more visibility into the decision process.

In order to make the right choice, you need to experiment with a few algorithms and test until you find the one whose results are most aligned with what you’re trying to achieve with your data. But is trial and error on every available algorithm an efficient approach?

Of course not. Instead, we reduce the pool of candidate algorithms based on multiple factors, such as:

- The number of data points and features

- Data format

- Linearity of data

- Training time

- Prediction time

- Business requirements and developer intuition
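
Once the pool is small, the remaining candidates can be compared on data they were not trained on. The sketch below assumes two hypothetical candidates (a "predict the average" baseline and a least-squares straight line), made-up house data, and mean absolute error as the score. Any of these could be swapped for something else.

```python
from statistics import mean

# Made-up (area, price) pairs, split by hand into training and validation sets.
train = [(50, 100000), (80, 165000), (100, 195000), (120, 245000), (150, 300000)]
validation = [(60, 125000), (110, 215000), (140, 285000)]

def fit_mean(data):
    """Baseline candidate: always predict the average training price."""
    average = mean(price for _, price in data)
    return lambda area: average

def fit_line(data):
    """Second candidate: least-squares straight line through the training data."""
    xs = [x for x, _ in data]
    ys = [y for _, y in data]
    x_bar, y_bar = mean(xs), mean(ys)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in data) / sum((x - x_bar) ** 2 for x in xs)
    intercept = y_bar - slope * x_bar
    return lambda area: slope * area + intercept

def mean_absolute_error(model, data):
    """Average size of the prediction error on the given data."""
    return mean(abs(model(x) - y) for x, y in data)

candidates = {"mean baseline": fit_mean, "straight line": fit_line}
scores = {name: mean_absolute_error(fit(train), validation) for name, fit in candidates.items()}
best = min(scores, key=scores.get)
print(scores, "->", best)
```

Scoring on the validation set rather than the training set is the important design choice here: it checks how a candidate handles houses it has never seen.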

Now, what do these algorithms do in general? Each ML algorithm has an optimization process at its heart, in which it 'learns' by training its parameters on the patterns available in the given data.

So, again, how does learning happen, and what is the optimization process?

When we feed data into a model, the model tries to build a relation between the target variable 'Y' and the patterns available in the input 'X'. In doing so it updates, or trains, its own parameters so that when we later give it a similar pattern, it can use those trained parameters to predict the target.
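
As one possible illustration of such an optimization process, here is a small gradient descent loop in plain Python. It is only a sketch under assumptions: the model is a straight line with two parameters `w` and `b`, the toy data is generated from y = 3x + 1, and the learning rate and step count are hand-picked values that happen to work for this data.

```python
# Toy data generated from y = 3x + 1, so we know what "learned" should look like.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 4.0, 7.0, 10.0, 13.0]

def train(xs, ys, learning_rate=0.05, steps=2000):
    """Fit y = w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0  # the parameters start out knowing nothing
    n = len(xs)
    for _ in range(steps):
        # How far off is the current guess for each example?
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2 / n * sum(errors)
        # Nudge each parameter in the direction that reduces the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

w, b = train(xs, ys)
print(f"learned w={w:.3f}, b={b:.3f}")
```

The loop never sees the rule "3x + 1". It only sees examples and repeatedly adjusts `w` and `b` to shrink the error, which is the "learning" described above.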

When it’s all said and done, and you’ve successfully applied a machine-learning algorithm to analyze your data and learn from it, you have a trained model.

Step 3: Make predictions

There is so much you can do with your newly trained model. You could import it into a software application you’re building, deploy it to a web back end, or upload and host it on a cloud service. Your trained model is now ready to take in new data and feed you predictions, aka results.
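
A minimal sketch of that hand-off is shown here, assuming the trained model is nothing more than a couple of numbers that can be stored as JSON. The parameter values, the file name and the helpers `save_model`, `load_model` and `predict` are all hypothetical. Real frameworks ship their own save and load formats.

```python
import json
import os
import tempfile

# Pretend these parameters came out of training (hypothetical values).
trained_model = {"kind": "linear", "slope": 2000.0, "intercept": 1000.0}

def save_model(model, path):
    """Write the model parameters to a JSON file."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(model, handle)

def load_model(path):
    """Read the model parameters back from a JSON file."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)

def predict(model, area):
    """Apply the stored parameters to a new, unseen input."""
    return model["slope"] * area + model["intercept"]

# "Training side": write the model to disk.
model_path = os.path.join(tempfile.mkdtemp(), "house_price_model.json")
save_model(trained_model, model_path)

# "Application side": load it back and ask for a prediction.
loaded = load_model(model_path)
print(predict(loaded, 90))
```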

These results can look different depending on what kind of algorithm you go with. If you need to know what something is, go with a classification algorithm, which comes in two types. Binary classification assigns each data point to one of two categories. Multi-class classification sorts data into, you guessed it, multiple categories.
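
To show both flavours side by side, here is a sketch of a nearest-centroid classifier in plain Python. The choice of technique, the features (area and number of bedrooms), the labels and the data are all assumptions made for this example.

```python
from statistics import mean

def fit_centroids(examples):
    """Average the feature values seen for each label."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }

def classify(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def distance(label):
        return sum((a - b) ** 2 for a, b in zip(features, centroids[label]))
    return min(centroids, key=distance)

# Binary: two categories. Features are (area, bedrooms), both made up.
binary_examples = [((45, 1), "flat"), ((60, 2), "flat"), ((180, 4), "house"), ((220, 5), "house")]
binary_model = fit_centroids(binary_examples)

# Multi-class: the same code, just with more than two categories.
multi_examples = binary_examples + [((600, 9), "mansion"), ((750, 12), "mansion")]
multi_model = fit_centroids(multi_examples)

print(classify(binary_model, (70, 2)))
print(classify(multi_model, (650, 10)))
```

Nothing in the code changes between the two cases; only the number of distinct labels in the training data does.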

When the result you’re looking for is an actual number, you’ll want to use a regression algorithm. A regression model typically gives each input feature a weight that reflects its importance, learns those weights from historical data, and combines the weighted inputs into a numeric prediction.

Both regression and classification are supervised types of algorithms, meaning you need to provide labelled data and direction for the computer to learn. There are also unsupervised algorithms that don’t require labelled data or any guidance on the kind of result you’re looking for.

One form of unsupervised algorithm is clustering. You use clustering when you want to understand the structure of your data. You provide a set of data and let the algorithm identify the categories within that set. Anomaly detection, on the other hand, is an unsupervised technique you can use when most of your data looks normal and uniform, and you want the algorithm to pull out anything out of the ordinary that doesn’t fit with the rest of the data.
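
Both ideas fit in a few lines of plain Python. This is a sketch under assumptions: clustering is done with a tiny one-dimensional k-means, anomaly detection with a simple "more than two standard deviations from the mean" rule, and all the numbers are made up. The helper names `k_means_1d` and `find_anomalies` are hypothetical.

```python
from statistics import mean, pstdev

def k_means_1d(values, centers, rounds=10):
    """Tiny 1-D k-means: assign each value to its nearest center, then re-average."""
    centers = list(centers)
    for _ in range(rounds):
        groups = [[] for _ in centers]
        for value in values:
            nearest = min(range(len(centers)), key=lambda i: abs(value - centers[i]))
            groups[nearest].append(value)
        # Keep the old center if a group ended up empty.
        centers = [mean(group) if group else center for group, center in zip(groups, centers)]
    return centers, groups

def find_anomalies(values, threshold=2.0):
    """Flag values more than `threshold` standard deviations from the mean."""
    centre, spread = mean(values), pstdev(values)
    return [v for v in values if spread and abs(v - centre) / spread > threshold]

# Clustering: no labels, just areas. Start the two centers at the extremes.
areas = [48, 52, 55, 60, 190, 205, 210, 220]
centers, groups = k_means_1d(areas, centers=[min(areas), max(areas)])
print(centers, groups)

# Anomaly detection: mostly uniform daily sales with one odd day.
daily_sales = [100, 102, 98, 101, 99, 100, 103, 97, 250]
print(find_anomalies(daily_sales))
```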

Although supervised algorithms are more common, it’s good to play around with each algorithm type and use case to better understand probability and practice splitting and training data in different ways. The more you toy with your data, the better your understanding of what machine learning can accomplish will become.
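
If you want to practice the splitting part, a helper like the one below is enough to get started. It is a hand-written sketch, not a function from any library. The name `split_rows`, the 25% test fraction and the seeds are arbitrary choices for illustration.

```python
import random

def split_rows(rows, test_fraction=0.25, seed=0):
    """Shuffle a copy of the rows and split it into training and test parts."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # seeded, so the split is repeatable
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

rows = list(range(20))  # stand-in for 20 data records
for seed in (0, 1, 2):
    train_rows, test_rows = split_rows(rows, seed=seed)
    print(seed, len(train_rows), len(test_rows), sorted(test_rows))
```

Changing the seed gives a different split of the same data, which is a cheap way to see how much your results depend on which rows happened to land in the training set.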

Ultimately, machine learning helps you find new ways to make life easier for your customers and easier for yourself. Self-driving cars are not necessary